The key to innovation isn’t finding more academic research; it’s learning to ruthlessly ignore 99% of it to find the signals that can actually survive in the market.

- Academic incentives (novelty) and industry needs (viability) are fundamentally misaligned, causing most research to be commercially useless.

- Lab results often fail in the real world due to “Context Collapse”—a predictable loss of effect when moving from a sterile environment to a complex one.

Recommendation: Adopt a “Commercial Viability Filter” from day one. Instead of asking “Is this research true?”, ask “Is the effect large enough to matter, and can it be reproduced cheaply enough to test?”

For R&D managers and entrepreneurs, the world of academic research presents a paradox. It’s a vast ocean of human knowledge, containing the potential seeds of breakthrough products and billion-dollar industries. Yet, it often feels like an impenetrable fortress, guarded by paywalls, jargon, and a culture that seems utterly disconnected from commercial realities. Many leaders are told to simply “read more papers” or “collaborate with universities,” but this advice often leads to wasted time and resources on ideas that were never destined to leave the lab.

The core issue is a misalignment of incentives. Academia rewards novelty and statistical significance, often on a microscopic scale. Industry, however, rewards market fit, scalability, and robust performance in messy, unpredictable real-world environments. This chasm explains why, as some research suggests, so many new products fail to find their footing. The common approach of trying to directly translate a single paper into a product is flawed from the start. It treats research as a set of instructions rather than what it truly is: a collection of weak signals in a sea of noise.

But what if the true skill wasn’t in navigating the entire ocean, but in building a better net? What if the key to leveraging academic findings was not to embrace them all, but to apply a ruthless, pragmatic filter to identify the rare ideas with genuine commercial potential? This isn’t about doing more R&D; it’s about de-risking innovation by understanding the inherent limitations of academic work and knowing precisely where to look for valuable signals.

This guide provides a pragmatic framework for the innovation broker. It will show you how to vet academic ideas for commercial robustness, how to access research intelligence legally and creatively, how to prototype breakthrough concepts for under $500, and how to make smart scaling decisions that align with market reality. It’s time to move from being a passive consumer of research to an active, strategic translator of science into value.

Summary: A Pragmatic Framework for Research-Driven Innovation

- Why do 90% of academic papers never lead to a marketable product?

- How to legally access expensive academic journals without a university subscription?

- Theory or Practice: Which should lead the development phase of a new product?

- The “P-hacking” trap that leads companies to make bad decisions based on faulty studies

- When to approach a university lab for collaboration: The optimal project maturity level

- Why does spending $10,000 on a mold only make sense if you sell over 1,000 units?

- How to build a functional smart textile prototype with a budget of under $500?

- How to explain complex technical concepts to non-experts in under 2 minutes?

Why do 90% of academic papers never lead to a marketable product?

The startling statistic that often circulates in innovation circles is that 95% of new products fail. While the real number is debated, the sentiment resonates because everyone has seen promising technologies die on the vine. The primary reason for this failure, especially for ideas born from academia, is a fundamental disconnect between academic goals and market demands. An academic paper’s goal is to prove a novel effect, even a tiny one, in a controlled environment. A product’s goal is to solve a real-world problem so effectively that people will pay for it.

This is where the concept of a Commercial Viability Filter becomes essential. Before investing a single dollar into development, you must translate the findings of a paper into the language of market risk. A statistically significant result in a lab does not equal a commercially significant advantage. Academics are incentivized to find something *new*, whereas industry needs something that is *better*, *cheaper*, or *faster* by a margin large enough to change customer behavior.

Applying this filter means you stop being impressed by the novelty of a finding and start asking tougher questions. Is the effect size meaningful? A 2% improvement in material strength makes a great paper but a terrible product feature. Does the research show causation or just correlation? Many business decisions are wrongly based on confusing the two. Most importantly, does the sterile context of the lab resemble your chaotic market environment? Failing to vet research rigorously against these commercial realities is a leading reason academic “breakthroughs” become business write-offs.
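The first question can be made concrete with a minimal Python sketch of one possible filter. It contrasts a statistical effect size with the relative gain a customer would actually notice. The statistical measure is Cohen's d, computed here with a simple pooled standard deviation that assumes similar group sizes. The 10% minimum gain is a hypothetical threshold for illustration, not a rule from the research; set it from your own market.

```python
from math import sqrt


def cohens_d(mean_control: float, mean_treated: float,
             sd_control: float, sd_treated: float) -> float:
    """Standardized mean difference. Pools the two SDs by averaging their
    variances, which assumes roughly equal group sizes."""
    pooled_sd = sqrt((sd_control ** 2 + sd_treated ** 2) / 2)
    return (mean_treated - mean_control) / pooled_sd


def passes_viability_filter(baseline: float, improved: float,
                            min_relative_gain: float = 0.10) -> bool:
    """Hypothetical rule: only prototype if the improvement over the
    baseline is at least min_relative_gain (10% by default)."""
    relative_gain = (improved - baseline) / baseline
    return relative_gain >= min_relative_gain


# A 2% strength gain measured with very low lab noise (SD = 1 MPa):
d = cohens_d(100.0, 102.0, 1.0, 1.0)              # 2.0, "large" by statistical convention
worth_it = passes_viability_filter(100.0, 102.0)  # False: only a 2% gain
```

The two numbers answer different questions. Low lab noise can make a tiny improvement look statistically dramatic, while the relative gain shows what a buyer would actually experience.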

How to legally access expensive academic journals without a university subscription?

One of the biggest frustrations for entrepreneurs is the academic paywall. How can you find the next big thing if it’s locked behind a $40 article fee? The secret is to stop thinking like a student trying to access a specific paper and start thinking like an intelligence analyst gathering signals from the entire ecosystem. The paper itself is often the last, most polished, and least useful part of the research process for a business leader.

The real value lies in the “para-academic” channels where research is discussed, debated, and contextualized. This includes researchers’ social media threads (especially on X/Twitter), where they often share findings and behind-the-scenes thoughts. Conference poster presentations, often available online, are visual summaries of work-in-progress. Lab websites and PhD student blogs can offer a glimpse into future research directions years before they are published. Furthermore, visual discovery tools like ResearchRabbit and Connected Papers can help you map entire fields and identify seminal or review articles that are often open access.
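ResearchRabbit and Connected Papers are interactive tools. For a scripted check, one option this article does not otherwise cover is Unpaywall's public REST API, which reports whether a legal open-access copy of a paper exists for a given DOI. The sketch below assumes the v2 endpoint `https://api.unpaywall.org/v2/{doi}?email=...` (Unpaywall asks for a contact email on each request) and its `is_oa` and `best_oa_location` fields. The network call is kept separate so the parsing logic can be tried on a sample response.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

# Assumed Unpaywall v2 endpoint; check the current Unpaywall docs before relying on it.
UNPAYWALL_URL = "https://api.unpaywall.org/v2/{doi}?email={email}"


def fetch_unpaywall(doi: str, email: str) -> dict:
    """Query Unpaywall for one DOI (needs network access and a contact email)."""
    url = UNPAYWALL_URL.format(doi=urllib.parse.quote(doi),
                               email=urllib.parse.quote(email))
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


def best_open_copy(record: dict) -> Optional[str]:
    """Return a legal open-access link from an Unpaywall record, or None."""
    if not record.get("is_oa"):
        return None
    location = record.get("best_oa_location") or {}
    # Prefer a direct PDF link; fall back to the landing page URL.
    return location.get("url_for_pdf") or location.get("url")
```

In practice you would call `best_open_copy(fetch_unpaywall(doi, "you@example.com"))` with the DOI of the paper you are after. A `None` result means Unpaywall knows of no legal free copy, which is the moment to try the author's lab page or simply email the author.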

An even more creative approach is to look at where research is being applied. Patent databases like Google Patents are a goldmine; they show which companies are citing which foundational papers to protect their commercial products. This tells you which research is already considered commercially valuable. Finally, some organizations offer direct research access. For example, the YouTube Research Program provides academics with access to metadata, and in turn, the program’s participating institutions, like MIT and Stanford, publish research based on this data, creating a publicly accessible loop of insight.
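Suppose you have noted which papers a set of patents cite. Google Patents shows a "Non-Patent Citations" section on many patent pages. A few lines of Python can then rank those papers by how many different companies rely on them. The record format below is a hypothetical one you would fill in yourself; it is not an export format of any patent database.

```python
from collections import defaultdict


def rank_papers_by_commercial_interest(patents: list[dict]) -> list[tuple[str, int]]:
    """Rank cited papers by the number of distinct patent assignees citing them.

    Each record is assumed (hypothetically) to look like
    {"assignee": "Acme", "cited_papers": ["Paper A", ...]}.
    """
    assignees_per_paper: dict[str, set[str]] = defaultdict(set)
    for patent in patents:
        for paper in patent["cited_papers"]:
            assignees_per_paper[paper].add(patent["assignee"])
    ranked = [(paper, len(owners)) for paper, owners in assignees_per_paper.items()]
    # Most widely relied-upon papers first; ties broken alphabetically.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


# Hypothetical records gathered by hand:
patents = [
    {"assignee": "Acme", "cited_papers": ["Paper A", "Paper B"]},
    {"assignee": "Acme", "cited_papers": ["Paper A"]},
    {"assignee": "Globex", "cited_papers": ["Paper A", "Paper C"]},
]
ranking = rank_papers_by_commercial_interest(patents)
```

Counting distinct assignees rather than raw citations keeps one prolific filer from making a paper look more commercially important than it is.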

Case Study: The YouTube Research Program

The YouTube Research Program grants academic researchers access to global video metadata via its Data API. This supports large-scale projects and fosters public research. The program accepts applications from qualified academics, with researchers from top institutions like Stanford’s Human-Computer Interaction Group and MIT’s Computer Science and Artificial Intelligence Laboratory actively monitoring and contributing to publications, creating a rich source of accessible, industry-relevant studies.

Theory or Practice: Which should lead the development phase of a new product?

The classic debate in innovation is whether to lead with theory (a robust, data-backed plan) or practice (getting your hands dirty and iterating). For R&D managers translating academic research, the answer is a dynamic and continuous loop between the two. Leading with theory alone results in products that work perfectly in a spreadsheet but fail in a customer’s hands. Leading with practice alone leads to endless tinkering without a clear strategic direction.

The most effective approach is to use theory to define a “search area” and then use rapid, practical experimentation to validate or invalidate the theory in the real world. Academic research is the map; it tells you where others have found treasure (or dragons). It should be used to form a hypothesis, such as “We believe this piezoelectric effect can be used to create a self-powering sensor.”

This is where hands-on practice takes over. The goal is not yet to build a product, but to create the cheapest, fastest possible experiment to test the core of the hypothesis. This could be a crude rig of materials, a simple piece of code, or a mock-up of a user experience. The results of this practical test then feed back to refine the theory. If the experiment fails, was the theory wrong, or was the experiment flawed? If it succeeds, can the effect be amplified? This iterative loop between theoretical models and functional prototypes is the engine of effective R&D.

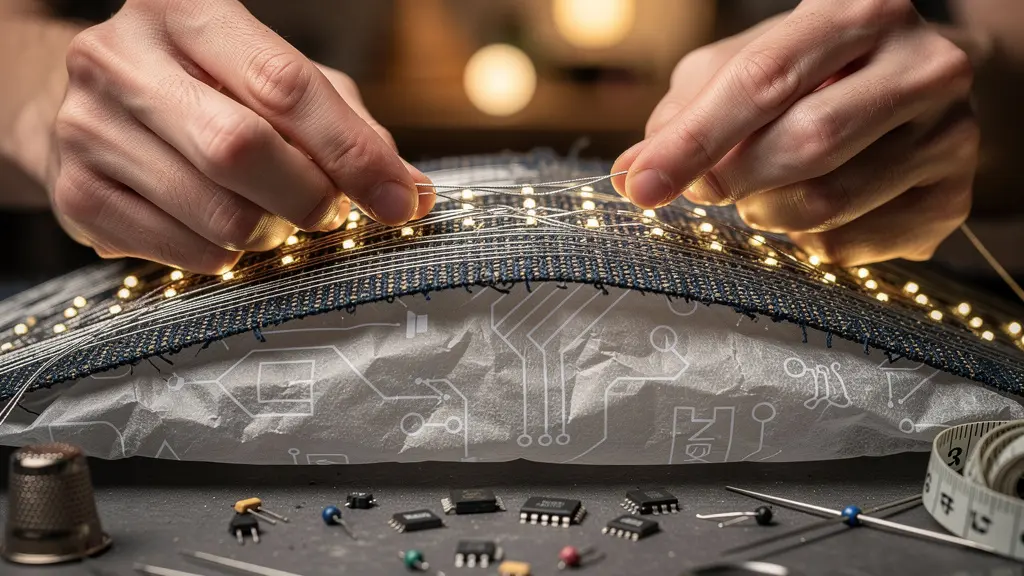

This image of hands weaving conductive threads, with circuit diagrams visible underneath, perfectly captures this synthesis. It’s the moment where the abstract theoretical model meets physical implementation. The scattered tools of both craft and technology signify the marriage of high-tech principles and hands-on creation. The product development journey isn’t linear from theory to practice; it’s a constant, messy, and creative oscillation between the two.

The “P-hacking” trap that leads companies to make bad decisions based on faulty studies

One of the most dangerous pitfalls when translating academic research is the implicit trust in “statistically significant” findings. This trust can lead companies down expensive rabbit holes, a risk amplified by a practice known as “p-hacking.” P-hacking (or data dredging) is the conscious or unconscious manipulation of data to produce a statistically significant result. It might involve running dozens of tests but only reporting the one that worked, or stopping data collection at a moment when the results look good. It’s a major reason for the “replication crisis” in science, and for businesses, it’s a source of catastrophic misdirection.
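The “run dozens of tests, report the one that worked” pattern can be quantified. The sketch below assumes independent tests on data with no real effect, where each p-value is uniformly distributed between 0 and 1. It compares the exact chance of at least one false positive with a quick simulation. It also shows the Bonferroni correction, one standard and deliberately conservative way to tighten the threshold. It does not model optional stopping, the other practice described in this section.

```python
import random


def false_positive_rate_analytic(n_tests: int, alpha: float = 0.05) -> float:
    """Chance that at least one of n independent null tests comes out 'significant'."""
    return 1 - (1 - alpha) ** n_tests


def simulate_p_hacking(n_tests: int, n_studies: int = 10_000,
                       alpha: float = 0.05, seed: int = 0) -> float:
    """Simulate studies with no real effect where only the best of n_tests is
    reported. Under the null hypothesis each p-value is uniform on [0, 1]."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_studies):
        smallest_p = min(rng.random() for _ in range(n_tests))
        if smallest_p < alpha:
            hits += 1
    return hits / n_studies


def bonferroni_alpha(n_tests: int, alpha: float = 0.05) -> float:
    """Per-test threshold that keeps the chance of any false positive at or below alpha."""
    return alpha / n_tests


exact = false_positive_rate_analytic(20)  # about 0.64
simulated = simulate_p_hacking(20)        # close to the exact value
```

With 20 tests at α = 0.05, the chance of at least one spurious “discovery” is about 64%, not 5%. Dividing the threshold by the number of tests (0.0025 here) restores the intended error rate, at the cost of missing some real but small effects.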

This is compounded by the “Context Collapse” phenomenon. Even when research is sound, its findings can evaporate when moved from the sterile, controlled environment of a lab to the messy, multi-sensory context of the real world. The effect of a specific color on purchasing decisions, for instance, might be demonstrated in a lab with perfect lighting but disappear entirely under the variable lighting of a retail store, where it competes with dozens of other sensory inputs. The stakes are real. While the persistent claim of an 80% new product failure rate is an exaggeration, research published in the Journal of Product Innovation Management puts the actual rate closer to 40% or less, a figure still high enough to warrant extreme caution.

As an innovation broker, your job is to be a professional skeptic. You must understand that a p-value is not a measure of truth or effect size. It only tells you how likely data at least this extreme would be if there were no real effect. The table below illustrates how quickly context collapse can erode or even negate promising lab results, turning a “sure thing” into a costly failure.

| Lab Environment | Real-World Creative Context | Impact on Results |
|---|---|---|
| Sterile, controlled conditions | Messy, multi-sensory environments | Effects reduced by 30-50% |
| Single variable testing | Multiple competing stimuli | Interactions override isolated effects |
| Neutral cultural context | Culturally-coded interpretations | Meaning shifts completely |
| Optimal lighting conditions | Variable store/gallery lighting | Color psychology effects negated |
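One way to use the first row of the table is to discount a lab result before judging it. This sketch treats the 30-50% reduction as a rough planning assumption, not a measured constant. It then checks whether the discounted effect still clears the smallest effect that would matter commercially, which is a threshold you must choose yourself. The other rows (competing stimuli, cultural meaning) cannot be captured by a single multiplier and still need a field test.

```python
def survives_context_collapse(lab_effect: float, min_useful_effect: float,
                              attenuation_range: tuple[float, float] = (0.30, 0.50)) -> dict:
    """Shrink a lab effect by an assumed real-world loss range and check whether
    it still clears a minimum commercially useful effect.

    lab_effect and min_useful_effect must share units (e.g. % uplift in sales).
    """
    low_loss, high_loss = attenuation_range
    best_case = lab_effect * (1 - low_loss)
    worst_case = lab_effect * (1 - high_loss)
    return {
        "best_case": best_case,
        "worst_case": worst_case,
        "survives_best_case": best_case >= min_useful_effect,
        "survives_worst_case": worst_case >= min_useful_effect,
    }


# Hypothetical: an 8% lab uplift, where 5% is the smallest uplift worth launching for.
result = survives_context_collapse(8.0, 5.0)
# best_case is about 5.6 (passes); worst_case is about 4.0 (fails)
```

A result that only survives the best case is exactly the kind of finding that deserves a cheap field experiment before any serious spending.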

To avoid this trap, never base a major decision on a single study. Look for meta-analyses and replication studies. And most importantly, run your own cheap, practical experiments to see if the effect survives contact with reality. This is the only true defense against making bad decisions based on seemingly good science.
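When several studies of the same effect exist, the simplest pooled estimate is the fixed-effect, inverse-variance weighted mean, where each study counts in proportion to 1/SE². The sketch below uses hypothetical numbers to show how one small study with a flashy result is pulled back toward two larger, more modest ones. When studies ran in genuinely different contexts, a random-effects model (for example DerSimonian-Laird) is usually more appropriate; this sketch does not implement one.

```python
from math import sqrt


def fixed_effect_meta(effects: list[float], std_errors: list[float]) -> tuple[float, float]:
    """Inverse-variance weighted (fixed-effect) pooled estimate and its standard error.

    Assumes every study estimates the same underlying effect.
    """
    if not effects or len(effects) != len(std_errors):
        raise ValueError("need at least one study and one standard error per effect")
    weights = [1 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    return pooled, pooled_se


# Hypothetical: one small study (effect 0.8, SE 0.4) and two larger ones.
pooled, pooled_se = fixed_effect_meta([0.8, 0.1, 0.2], [0.4, 0.1, 0.1])
# pooled is about 0.17 and pooled_se about 0.07, far below the headline 0.8
```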

When to approach a university lab for collaboration: The optimal project maturity level

The common advice to “collaborate with universities” is often given without the most crucial piece of information: *when*. Approaching a university lab at the wrong stage of your project’s maturity can be a waste of time for everyone. University labs are not contract R&D shops or consultants for hire; they are engines for fundamental discovery and talent development. Understanding their function is key to a fruitful partnership.

The best framework to use here is Technology Readiness Levels (TRLs), a scale from 1 (basic principles observed) to 9 (actual system proven in an operational environment). University labs excel at TRLs 1-3. This is the realm of blue-sky research, exploring fundamental principles, and developing early proofs-of-concept. If your company needs to understand a brand-new material, explore a novel algorithm, or test a truly wild hypothesis, a university lab is the perfect partner. You gain access to brilliant minds and cutting-edge equipment at a fraction of the cost of building that capability in-house.

However, if your project is at TRL 4-6 (from component validation in the lab through to prototype demonstration in a relevant environment) or higher, a university is likely the wrong partner. At this stage, you need engineering, optimization, and productization: skills better found in specialized design firms or your internal team. Asking a PhD student to optimize a manufacturing process is a misuse of their talent and the lab’s purpose. It’s also critical to clarify intellectual property (IP) ownership in a formal agreement *before* any work begins to avoid future conflicts.

The ideal collaboration is a relay race, not a three-legged race. A company can fund foundational research at the TRL 1-3 stage, and then, when a promising proof-of-concept emerges, the company’s internal team takes the baton to run the TRL 4-9 race toward commercialization. This respects the strengths of both academia and industry, creating a true win-win partnership.
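The relay-race handoff can be written down as a simple lookup. The short TRL labels below follow the commonly used EU Horizon 2020 wording. The partner recommendations come from this article and are not part of any TRL standard.

```python
# Short TRL labels in the EU Horizon 2020 style.
TRL_LABELS = {
    1: "basic principles observed",
    2: "technology concept formulated",
    3: "experimental proof of concept",
    4: "technology validated in lab",
    5: "technology validated in relevant environment",
    6: "technology demonstrated in relevant environment",
    7: "system prototype demonstration in operational environment",
    8: "system complete and qualified",
    9: "actual system proven in operational environment",
}


def suggested_partner(trl: int) -> str:
    """Who carries the baton at a given TRL, following the relay-race model."""
    if trl not in TRL_LABELS:
        raise ValueError("TRL must be an integer from 1 to 9")
    if trl <= 3:
        return "university lab"
    return "internal team or specialized design firm"


def describe(trl: int) -> str:
    return f"TRL {trl} ({TRL_LABELS[trl]}): {suggested_partner(trl)}"
```

For example, `describe(3)` yields `"TRL 3 (experimental proof of concept): university lab"`. That is the last stage at which this model hands work to academia.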

Key Takeaways

- The gap between academic research and market success is not about a lack of ideas, but a lack of a rigorous commercial filtering process.

- Lab results are not guaranteed to work in the real world. Actively test for “Context Collapse” where environmental factors reduce or negate a promising effect.

- Use a “Ladder of Tooling” approach: match your manufacturing investment to your sales volume and validation level to avoid costly premature scaling.

Why does spending $10,000 on a mold only make sense if you sell over 1,000 units?

This question gets to the heart of one of the most critical and often overlooked stages of commercialization: the transition from prototype to production. Many startups and R&D departments, excited by a functional prototype, rush to mass production, only to be bankrupted by the high upfront cost of tooling. The $10,000 injection mold is a classic example. If your product sells for $20 with a $10 profit margin, you need to sell 1,000 units just to break even on the mold, not counting marketing, shipping, and other costs. If the market demand is only 500 units, you’ve made a catastrophic financial error.

The solution is to adopt a “Ladder of Tooling” mindset, where you match your manufacturing method and investment to your level of market validation. Instead of jumping straight to a steel injection mold, you climb the ladder, de-risking at each step. Industry analysis from Highlight supports this approach, reporting a 30-50% reduction in failure rates when companies implement comprehensive testing protocols before committing to expensive tooling. Each rung of the ladder lets you test the product with real customers and gather feedback before making a bigger investment.

This disciplined approach prevents you from over-investing in an unproven product. The break-even calculation is crucial, and it depends on profit per unit. For a high-end designer object that earns $500 per unit, selling just 20 units covers a $10,000 mold. For a widget that earns $5 per unit, the same mold requires 2,000 sales. The “Ladder of Tooling” forces you to be honest about your unit economics and market size before you write a large check to a factory.
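The arithmetic behind these examples is a single division, rounded up because you cannot sell a fraction of a unit. Like the $20 example, this sketch ignores marketing, shipping and overhead. Treat its answer as a floor, not a target.

```python
from math import ceil


def break_even_units(tooling_cost: float, price: float, unit_cost: float) -> int:
    """Units needed for per-unit profit (price - unit_cost) to repay one-off tooling."""
    margin = price - unit_cost
    if margin <= 0:
        raise ValueError("price must exceed unit cost, or the tooling never pays back")
    return ceil(tooling_cost / margin)


def tooling_is_justified(expected_units: int, tooling_cost: float,
                         price: float, unit_cost: float) -> bool:
    """True only if expected demand at least reaches break-even."""
    return expected_units >= break_even_units(tooling_cost, price, unit_cost)


# The $20 product with $10 profit per unit and a $10,000 mold:
units_needed = break_even_units(10_000, 20, 10)        # 1000
justified = tooling_is_justified(500, 10_000, 20, 10)  # False: demand of 500 falls short
```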

Action Plan: Your Ladder of Tooling for Manufacturing

- RTV silicone molds: Aim for 10-50 units for initial market testing and photo samples. This represents a low-cost ($100-$500) way to get something into people’s hands.

- 3D printed molds: Scale to short runs of up to 100 units. This is ideal for design validation and first-run production ($500-$2,000 investment).

- CNC-machined aluminum molds: Use for pre-production runs of 500-1,000 units. At this stage, you are testing the manufacturing process itself ($2,000-$5,000).

- Steel injection molds: Commit to this only when you have proven demand and are ready for mass production of 1,000+ units. This is the high-cost, high-volume step ($10,000+).

- Calculate break-even point: Before each step, recalculate your break-even point based on the tooling cost and your unit price to ensure the next investment is justified by sales data, not just hope.
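The action plan can be turned into a small decision helper. This sketch takes the volumes from the list above and uses the upper end of each cost range, a deliberately conservative assumption. It picks the first rung whose volume covers your validated demand and reports the break-even volume for that rung's tooling. The list names no rung for 101 to 499 units, so the sketch assumes the aluminum rung covers that range.

```python
from math import ceil

# (rung, max units it is meant for, upper-end tooling cost in $), from the action plan.
LADDER = [
    ("RTV silicone mold", 50, 500),
    ("3D printed mold", 100, 2_000),
    ("CNC-machined aluminum mold", 1_000, 5_000),
    ("Steel injection mold", float("inf"), 10_000),
]


def choose_rung(validated_units: int, unit_margin: float) -> tuple[str, int]:
    """Return the cheapest rung covering validated demand and its break-even volume."""
    if unit_margin <= 0:
        raise ValueError("unit margin must be positive")
    for name, max_units, tooling_cost in LADDER:
        if validated_units <= max_units:
            return name, ceil(tooling_cost / unit_margin)
    raise RuntimeError("unreachable: the last rung has no volume cap")


# Hypothetical product earning $10 per unit:
print(choose_rung(40, 10))    # ('RTV silicone mold', 50)
print(choose_rung(5000, 10))  # ('Steel injection mold', 1000)
```

If the reported break-even volume is well above the demand you have actually validated, that is a signal to stay on the current rung and gather more sales data first.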

How to build a functional smart textile prototype with a budget of under $500?

The idea of “smart textiles” sounds expensive, futuristic, and out of reach for anyone without a corporate R&D budget. But this is where the “Minimum Viable Phenomenon” (MVPhen) approach is most powerful. The goal is not to build a fully finished, market-ready garment. The goal is to reproduce the core “magical” effect of the research in the scrappiest way possible to demonstrate its potential. Can you make fabric change color with heat? Can you make it respond to touch? With today’s hobbyist electronics, the answer is a resounding yes—and for well under $500.

The key is to break down the desired effect into its basic components: a controller (the “brain,” like an Arduino or ESP32), the active materials (like thermochromic pigments or conductive thread), and a power source. By focusing only on demonstrating the phenomenon, you avoid the costs associated with wearability, durability, and aesthetics that come with product development. You are building a “lab on a swatch” that proves the concept and excites stakeholders.

This approach allows you to test the core ideas from dozens of academic papers without breaking the bank. The table below provides a shopping list for creating four different smart textile effects, showing how accessible this kind of R&D has become. Each one delivers a powerful “wow” moment for a fraction of the cost of traditional prototyping.

The Minimum Viable Phenomenon (MVPhen) Approach

Inspired by digital art, the MVPhen principle emphasizes interactivity and participation. Instead of building a full product, the creator isolates and reproduces the single most important phenomenon from a research paper in the scrappiest way possible. This allows artists and innovators to guide users (or stakeholders) to participate in the creative process, offering a personalized and enriched experience that demonstrates the core value with minimal resources, effectively testing the “magic” before investing in the “product.”

| Desired Effect | Controller | Materials/Components | Approx. Total Cost |
|---|---|---|---|
| Thermochromism | Arduino Nano ($25) | Thermochromic pigments, heating wire ($75) | $100 |
| Capacitive Touch | ESP32 ($15) | Conductive thread, copper tape ($50) | $65 |
| Electroluminescence | Arduino Uno ($30) | EL wire, inverter, battery pack ($120) | $150 |
| Motion Response | ESP32 with IMU ($35) | Flex sensors, conductive fabric ($85) | $120 |
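For the capacitive-touch swatch, most of the software work is turning noisy raw readings into a clean touched or not-touched signal. The sketch below does this with two thresholds (hysteresis), so readings hovering near a single cutoff don't make the output flicker. It is written as plain Python so the logic can be tested on a laptop. On an original ESP32 running MicroPython, raw values could come from `machine.TouchPad(Pin(4)).read()`, where touching usually lowers the reading; some newer ESP32 variants report the opposite. The threshold numbers are hypothetical and must be calibrated on your own thread and fabric.

```python
# On an ESP32 running MicroPython, raw readings could come from:
#   from machine import Pin, TouchPad
#   pad = TouchPad(Pin(4)); raw = pad.read()
# This function only assumes that a touch LOWERS the reading.


def detect_touches(readings, press_below: int, release_above: int) -> list[bool]:
    """Convert raw capacitive readings to touch states using hysteresis:
    a touch starts when a reading drops below press_below and only ends
    when a reading rises above release_above."""
    if press_below >= release_above:
        raise ValueError("press threshold must be below release threshold")
    touched = False
    states = []
    for raw in readings:
        if not touched and raw < press_below:
            touched = True
        elif touched and raw > release_above:
            touched = False
        states.append(touched)
    return states


# Hypothetical readings: finger lands at the third sample and lifts at the sixth.
states = detect_touches([600, 590, 300, 320, 450, 610, 620],
                        press_below=400, release_above=500)
```

The reading of 450 stays "touched" because it sits between the two thresholds. That dead band is what keeps a trembling finger from registering as several taps.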

How to explain complex technical concepts to non-experts in under 2 minutes?

The final, and perhaps most crucial, skill of the innovation broker is translation. You can find the most brilliant academic paper, validate it with a perfect MVPhen, and map out a flawless path to production, but if you can’t explain why it matters to your CEO, investors, or marketing team in under two minutes, it will die in the boardroom. This is not about “dumbing down” the science; it’s about elevating the value proposition.

The best translators use a three-part technique: Analogy, Benefit, and Vision. First, find a powerful analogy that connects the complex concept to something the audience already understands. Don’t explain the physics; explain what it’s *like*. Second, immediately connect that analogy to a concrete benefit. What problem does this solve? How does it make the customer’s life better? Third, paint a brief, compelling vision of the future this technology enables.

For example, don’t say “We are using materials with a piezoelectric effect.” Instead, try this:

(Analogy) “We’ve found materials that generate electricity when they’re squeezed or bent. Think of it like a sponge that gives off power instead of water.”

(Benefit) “This means we can create shoes that charge your phone as you walk, eliminating the need for chargers and cables.”

(Vision) “Imagine a world where your own movement powers your devices. That’s the future we can build.”

Here’s another example for a complex AI concept: a ‘generative adversarial network’ (GAN). Instead of explaining the neural network architecture, you can describe it to artists as: “Imagine two AIs playing a game of creative tennis. One AI (the ‘Generator’) hits an image over the net. The other AI (the ‘Critic’) is a world-class judge that says ‘nope, that’s a fake’ and hits it back. They play until the Generator creates an image so good the Critic can’t tell it from a real one. The final result is something neither could have created alone.” This explanation is memorable and accurate in spirit; the ‘Critic’ corresponds to what the technical literature calls the discriminator. It also conveys the core collaborative-yet-adversarial dynamic.

The framework is here. The next step is to apply this critical filter to your own R&D pipeline and start separating the commercially viable signals from the academic noise. By adopting the mindset of an innovation broker—skeptical yet opportunistic, pragmatic yet visionary—you can systematically de-risk innovation and turn the vast ocean of academic research into your company’s most powerful competitive advantage.